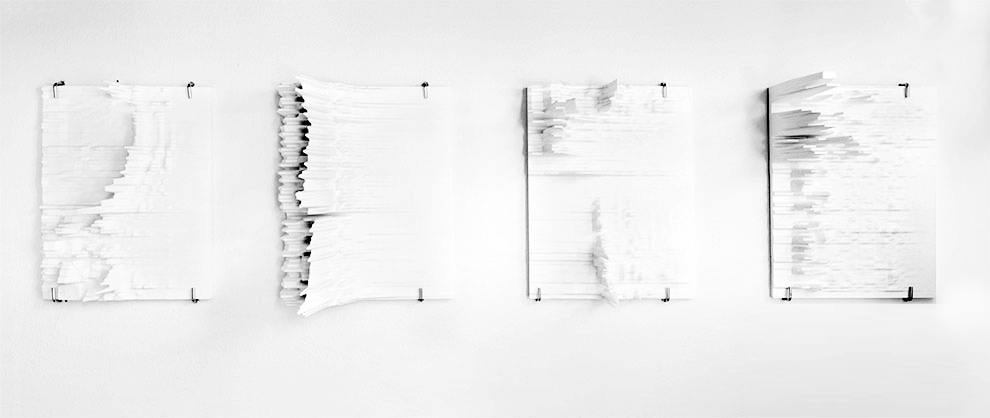

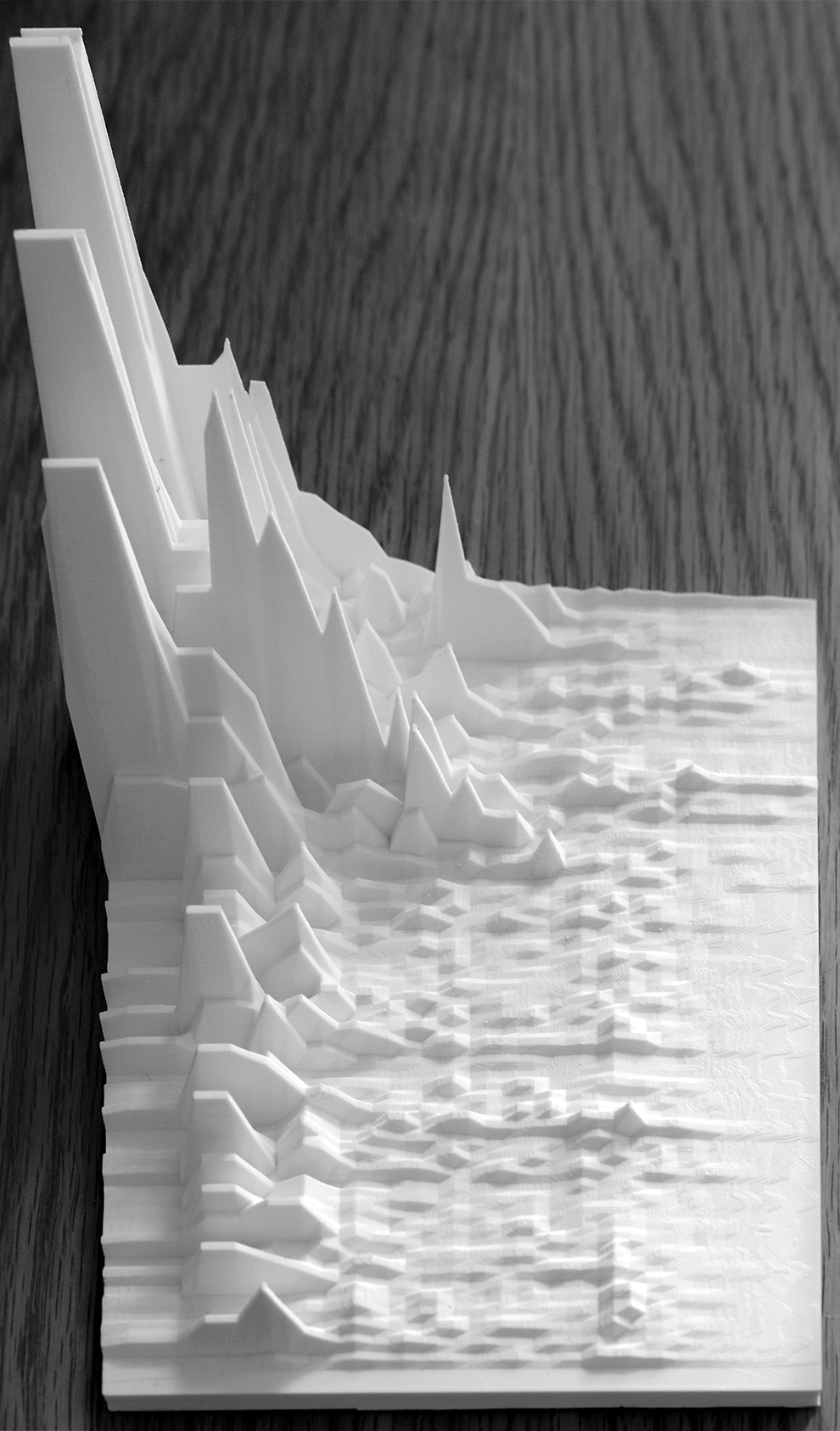

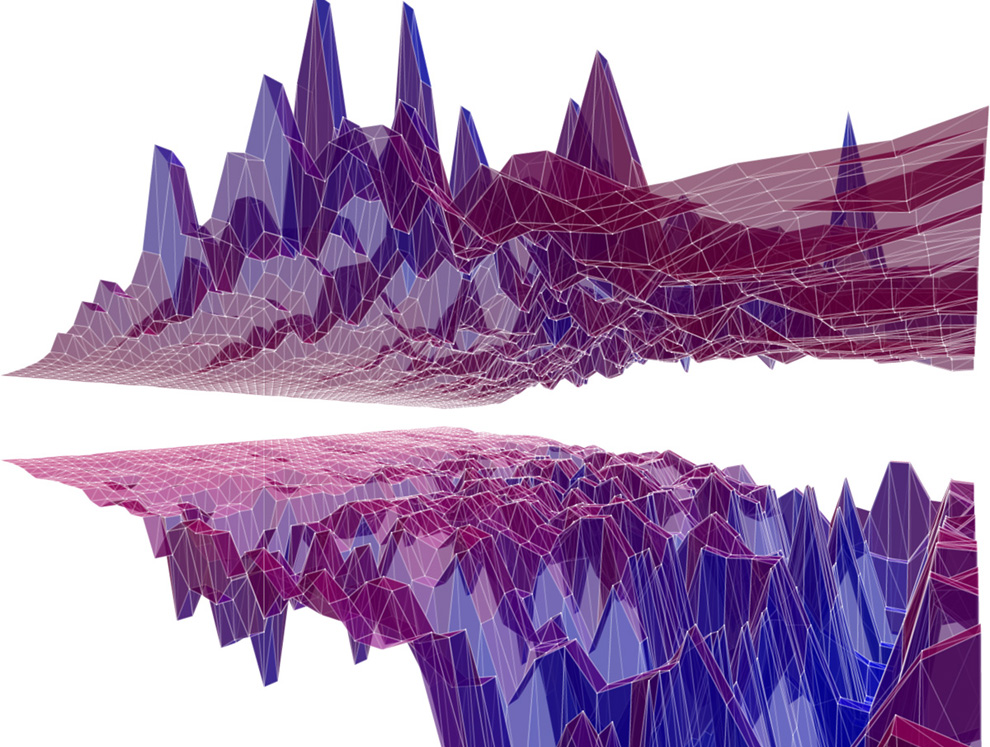

3DP – SOUNDWAVE

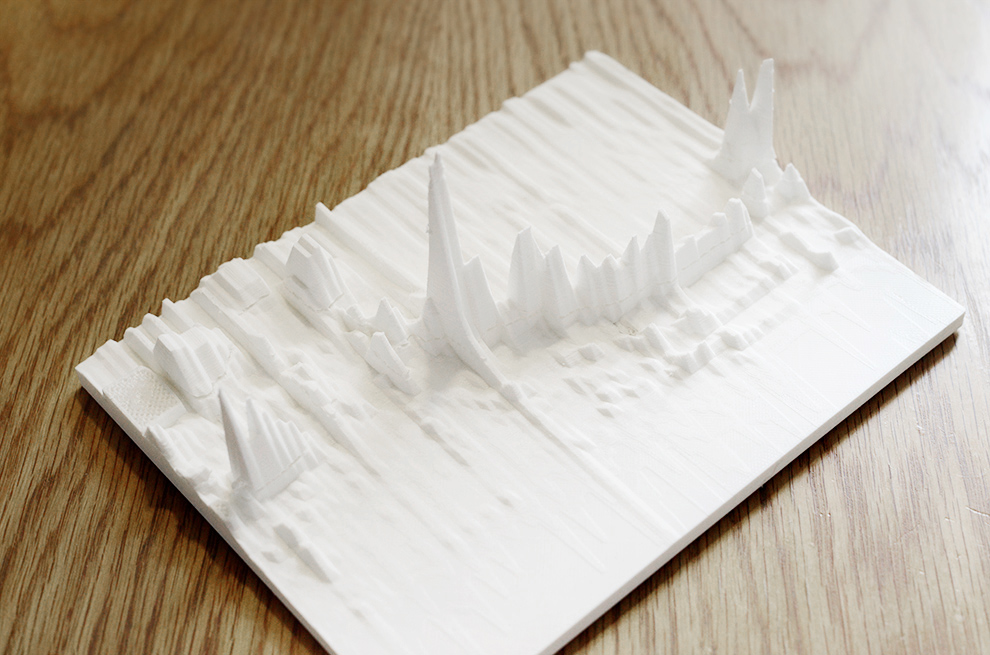

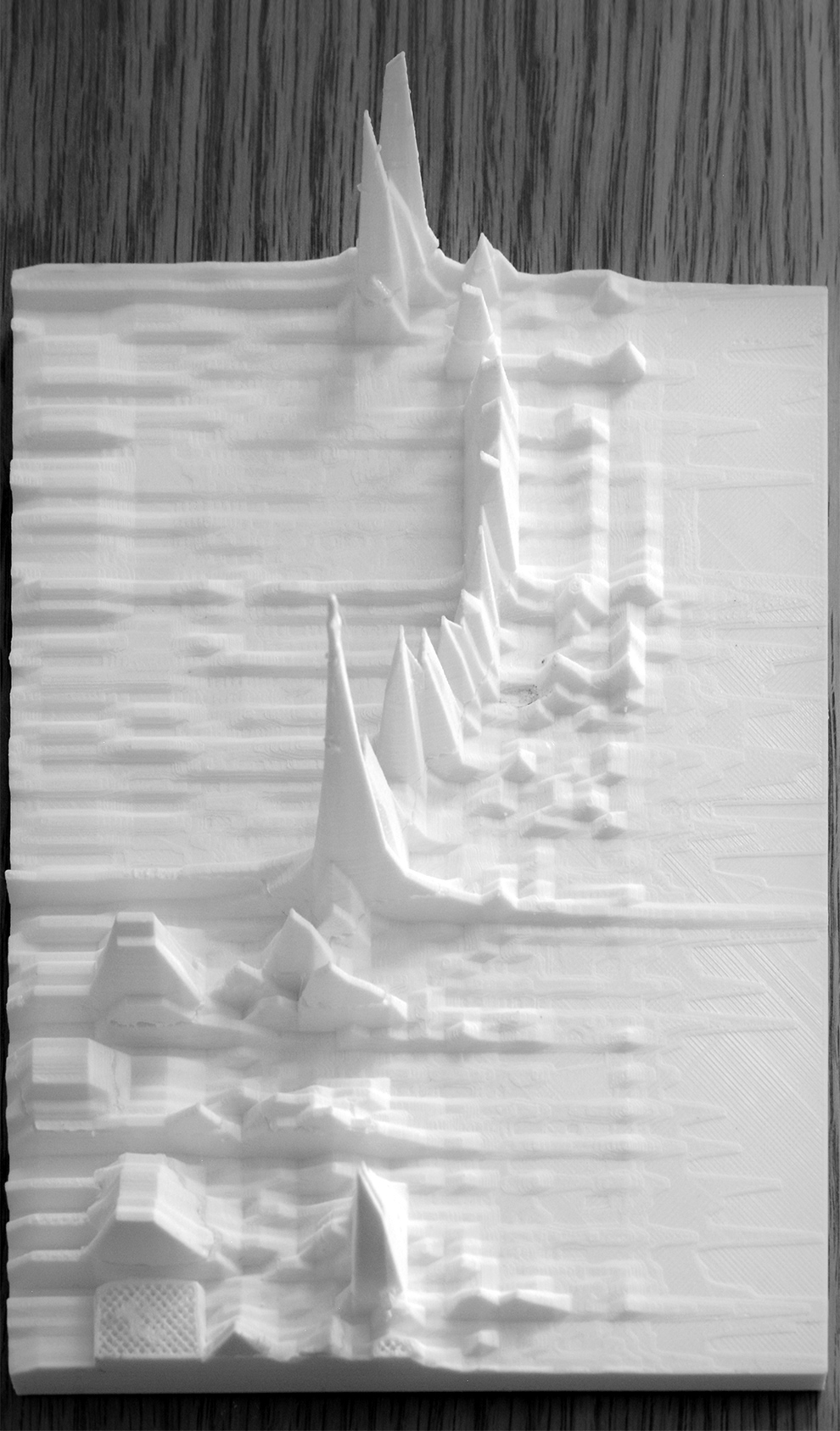

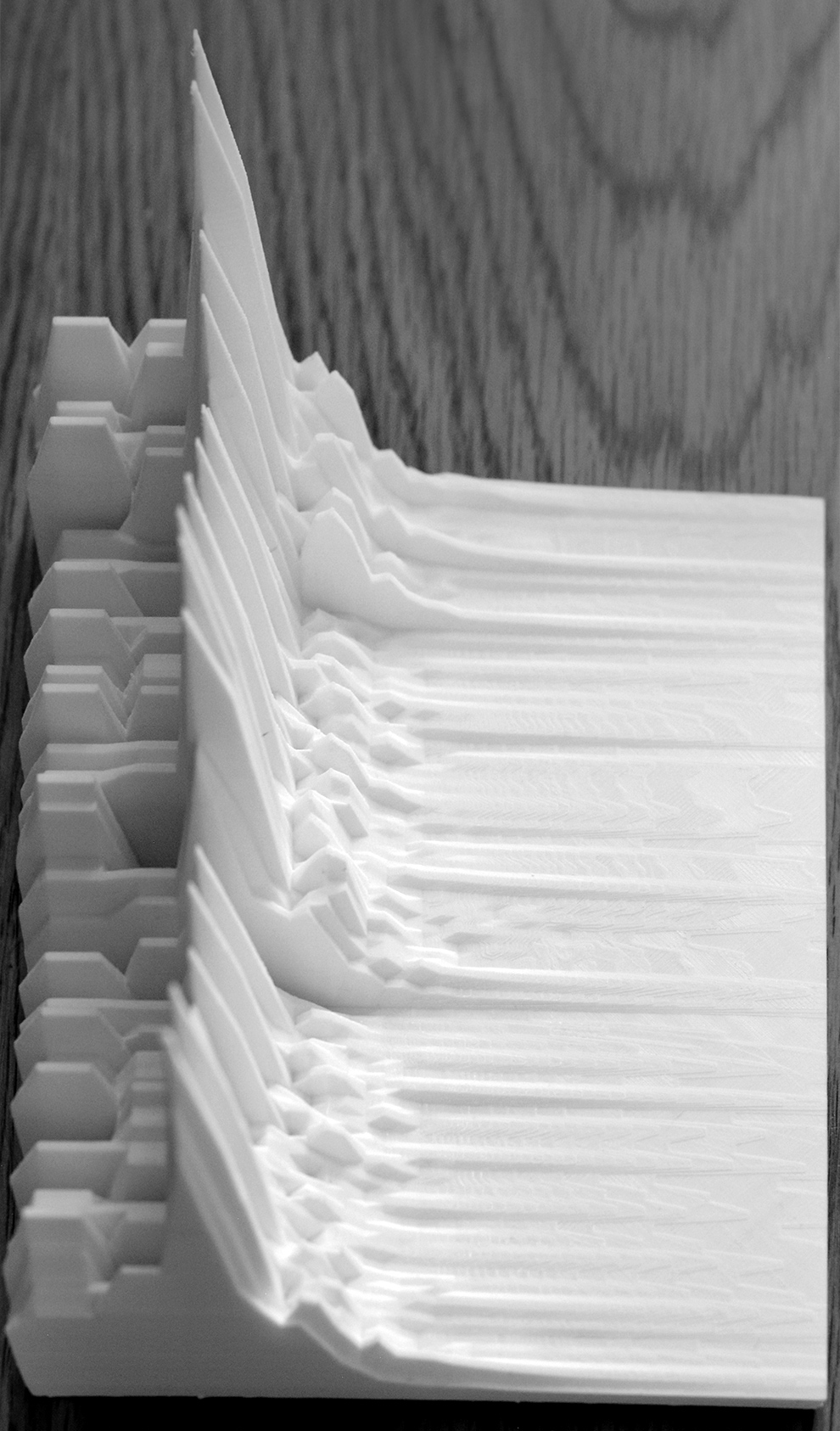

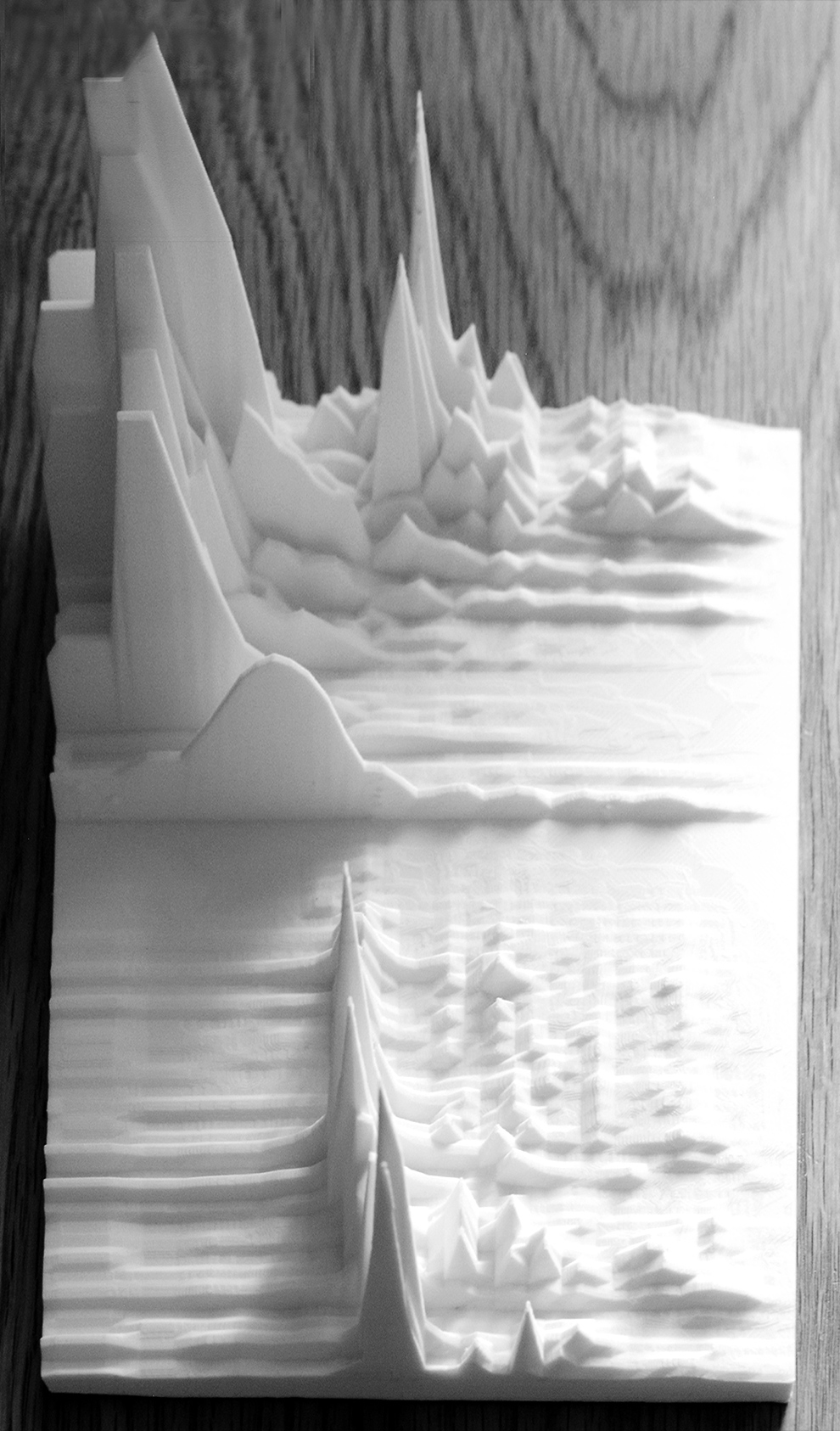

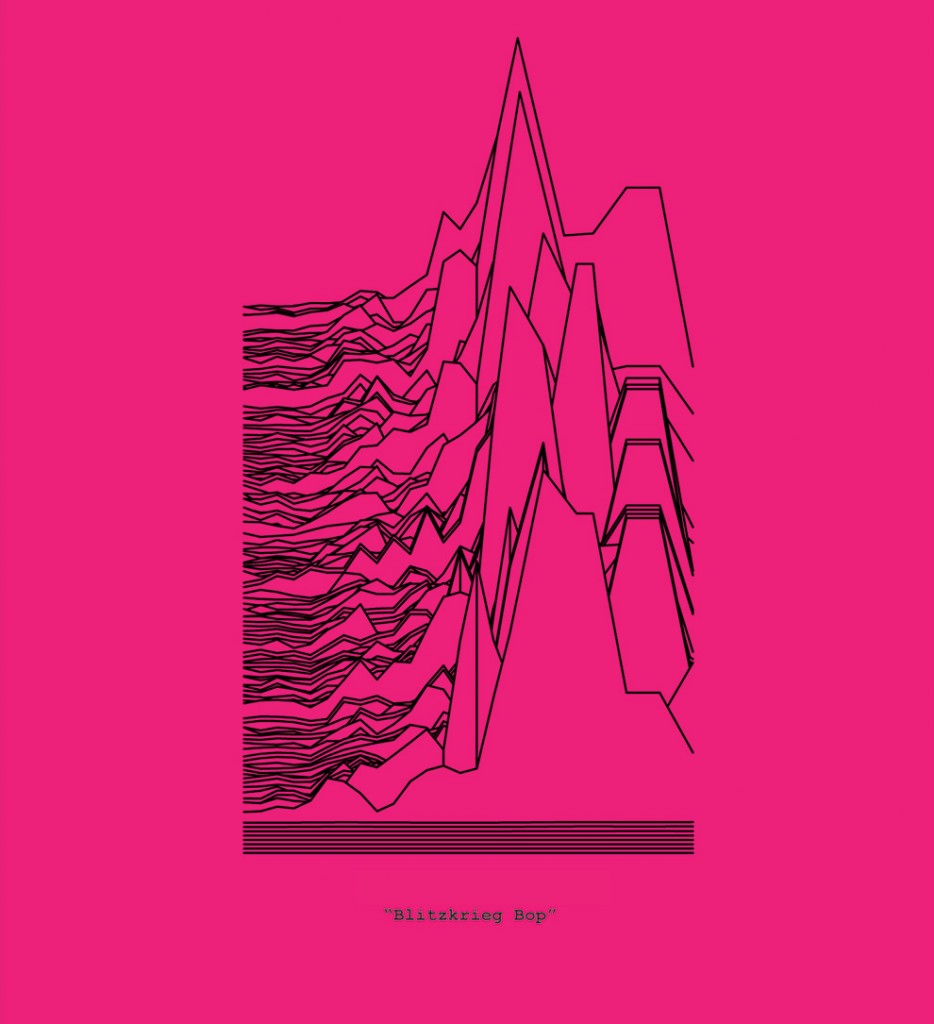

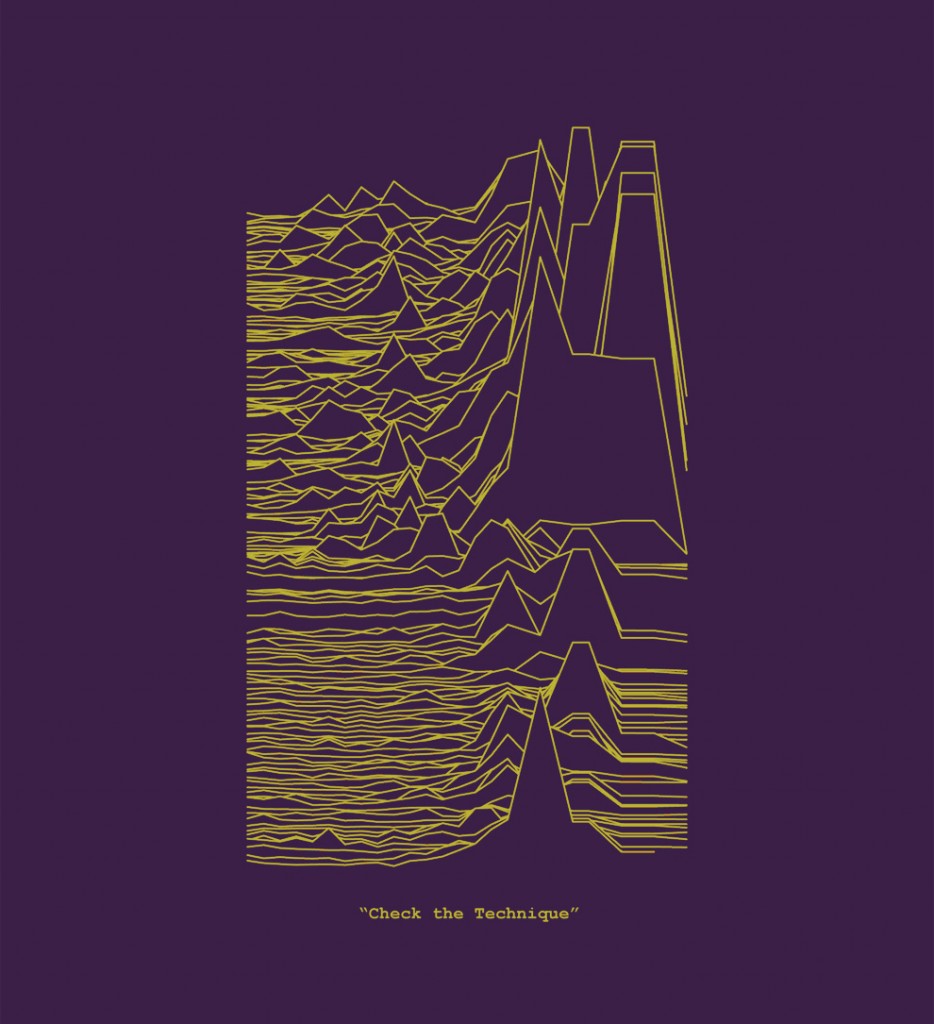

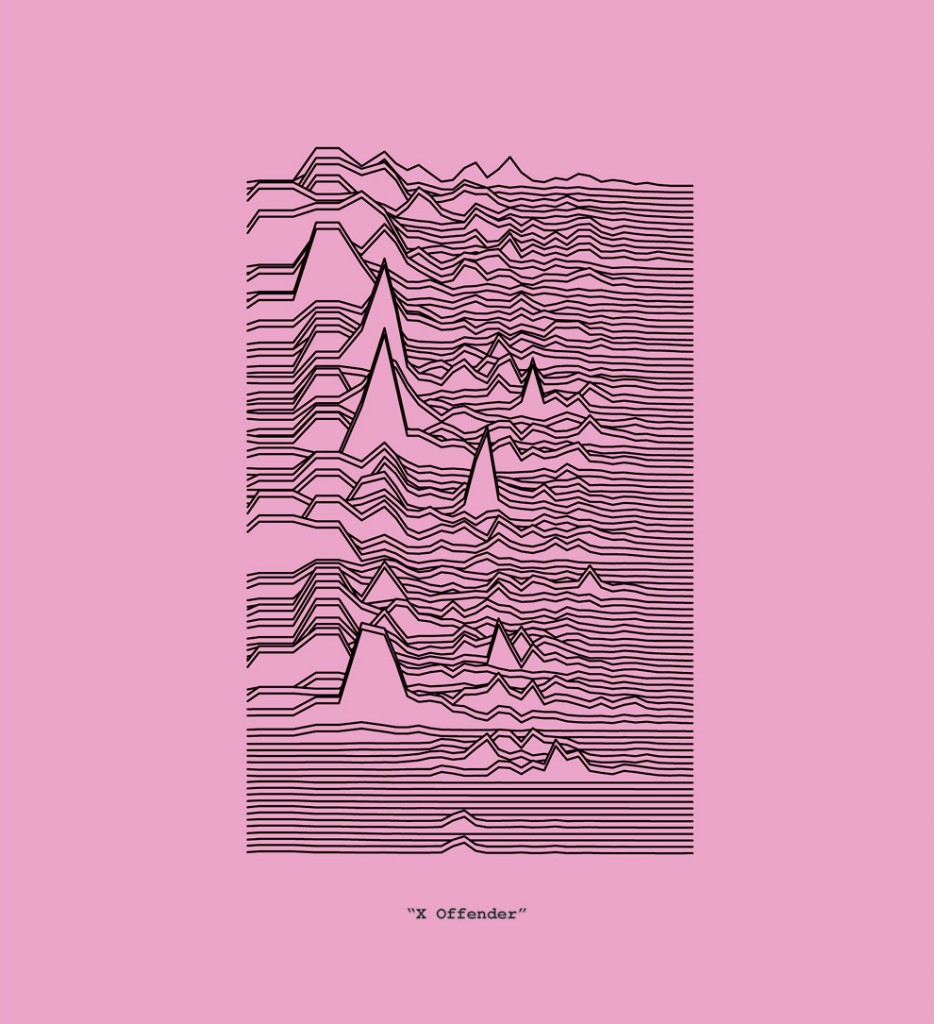

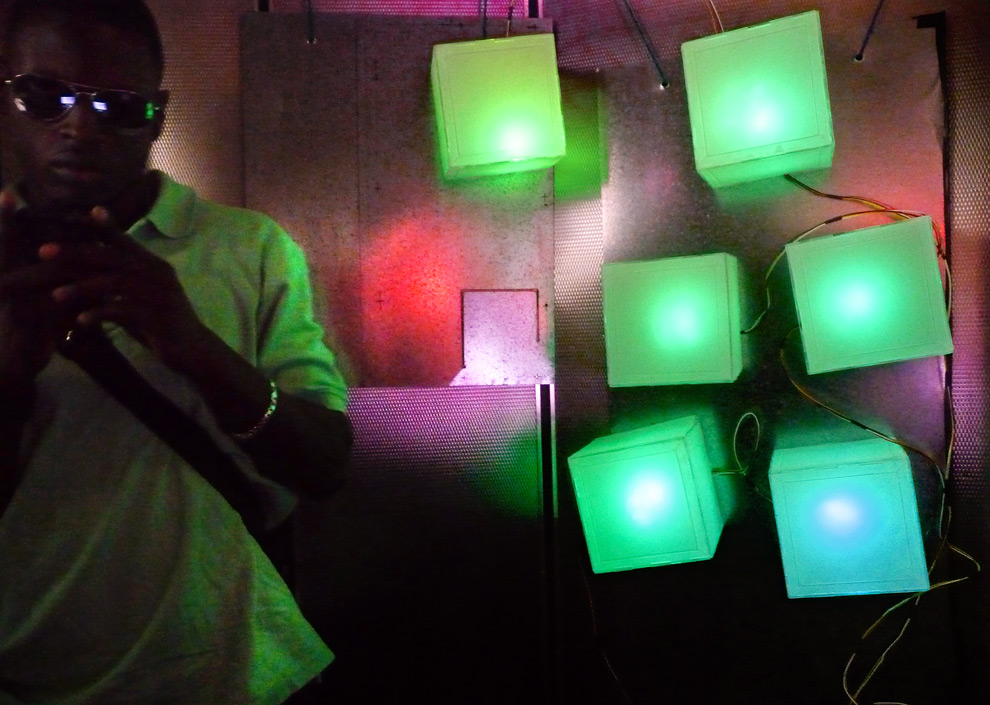

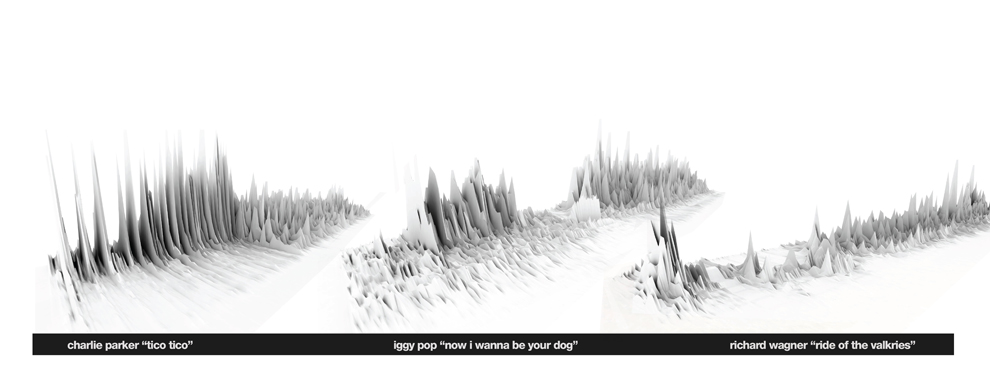

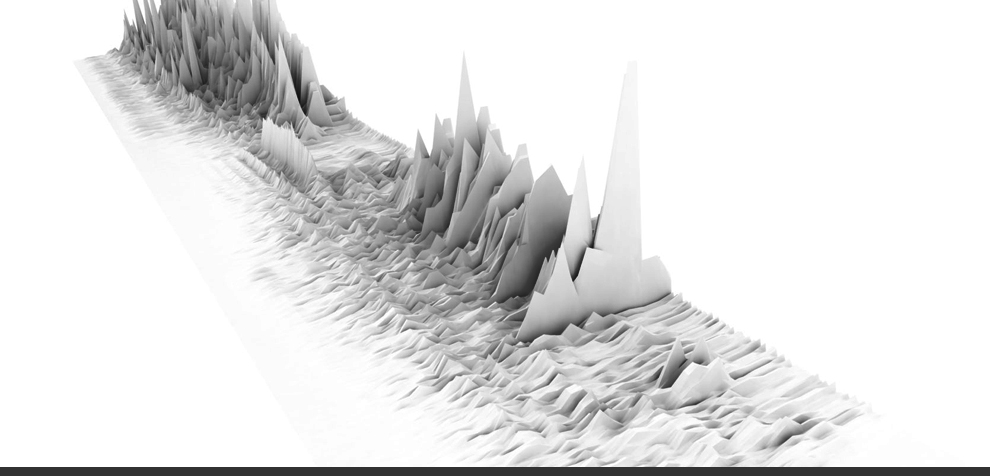

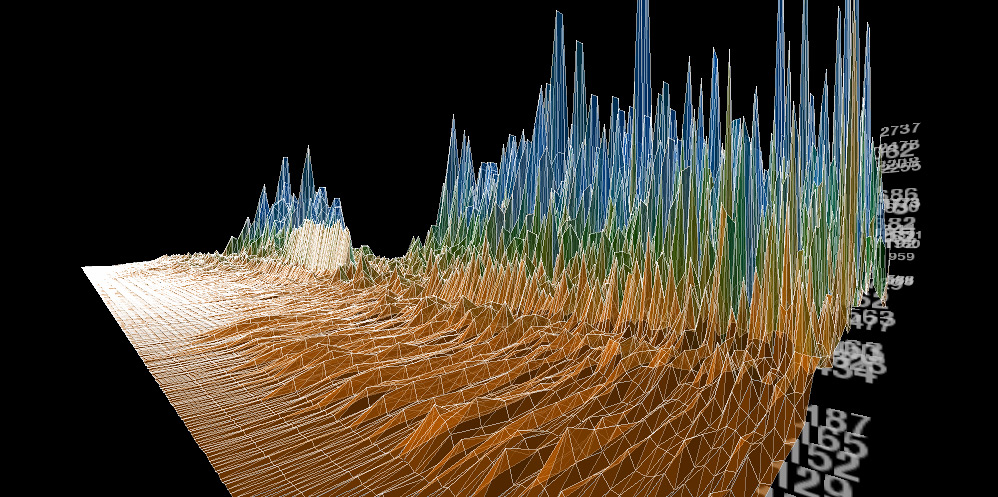

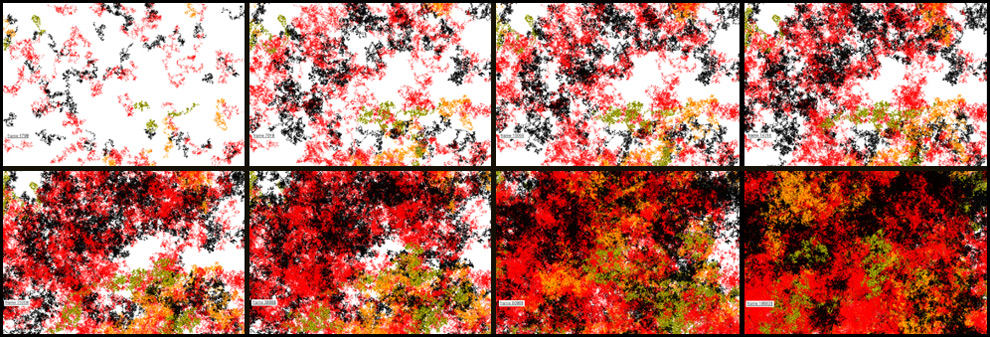

Building on previous work on real-time sound visualization, this exercise set out to create a series of physical objects that legibly convey the transformation of sound into a landscape. Four indicative moments of recorded sound were rendered as topographic forms in Processing, then 3D printed. Any piece of real-time or recorded sound would work; these prototypes were chosen because they highlight short moments in signature songs that warrant a closer look at the ordered or chaotic structure of the underlying sound. Once printed, each piece is a striking object, and the set allows for easy visual comparison.
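For readers curious how a snippet of sound can become a surface, here is a minimal sketch of one possible approach. It is not the Processing code used for these prints, which is not shown in this post. It is written in Python, and it assumes the landscape is built by folding the recording into a grid and raising each cell by its RMS loudness. The function name `heightmap` and the `rows`, `cols`, `base`, and `scale` parameters are hypothetical, chosen only for illustration.

```python
import math

def heightmap(samples, rows, cols, base=1.0, scale=10.0):
    """Fold a 1-D list of samples in [-1, 1] into a rows x cols grid of heights.

    Hypothetical helper: each cell covers a consecutive slice of the recording,
    and its height is base + scale * RMS amplitude of that slice.
    """
    cells = rows * cols
    if cells <= 0 or len(samples) < cells:
        raise ValueError("need at least one sample per grid cell")
    per_cell = len(samples) // cells  # samples averaged into each cell
    grid = []
    for r in range(rows):
        row = []
        for c in range(cols):
            start = (r * cols + c) * per_cell
            chunk = samples[start:start + per_cell]
            # Loud passages become peaks; silence stays flat at the base height.
            rms = math.sqrt(sum(s * s for s in chunk) / len(chunk))
            row.append(base + scale * rms)
        grid.append(row)
    return grid

# Synthetic stand-in for a recording: a tone, a short pause, then the tone again.
tone = [math.sin(2 * math.pi * 440 * n / 8000) for n in range(800)]
samples = tone + [0.0] * 800 + tone
grid = heightmap(samples, rows=3, cols=4)
```

In this toy input the middle row of the grid is completely flat, which is roughly how a pause in a song would read as a valley between two ridges. A printable model would still need the grid converted into a closed mesh, a step this sketch leaves out.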

The four selections shown here include:

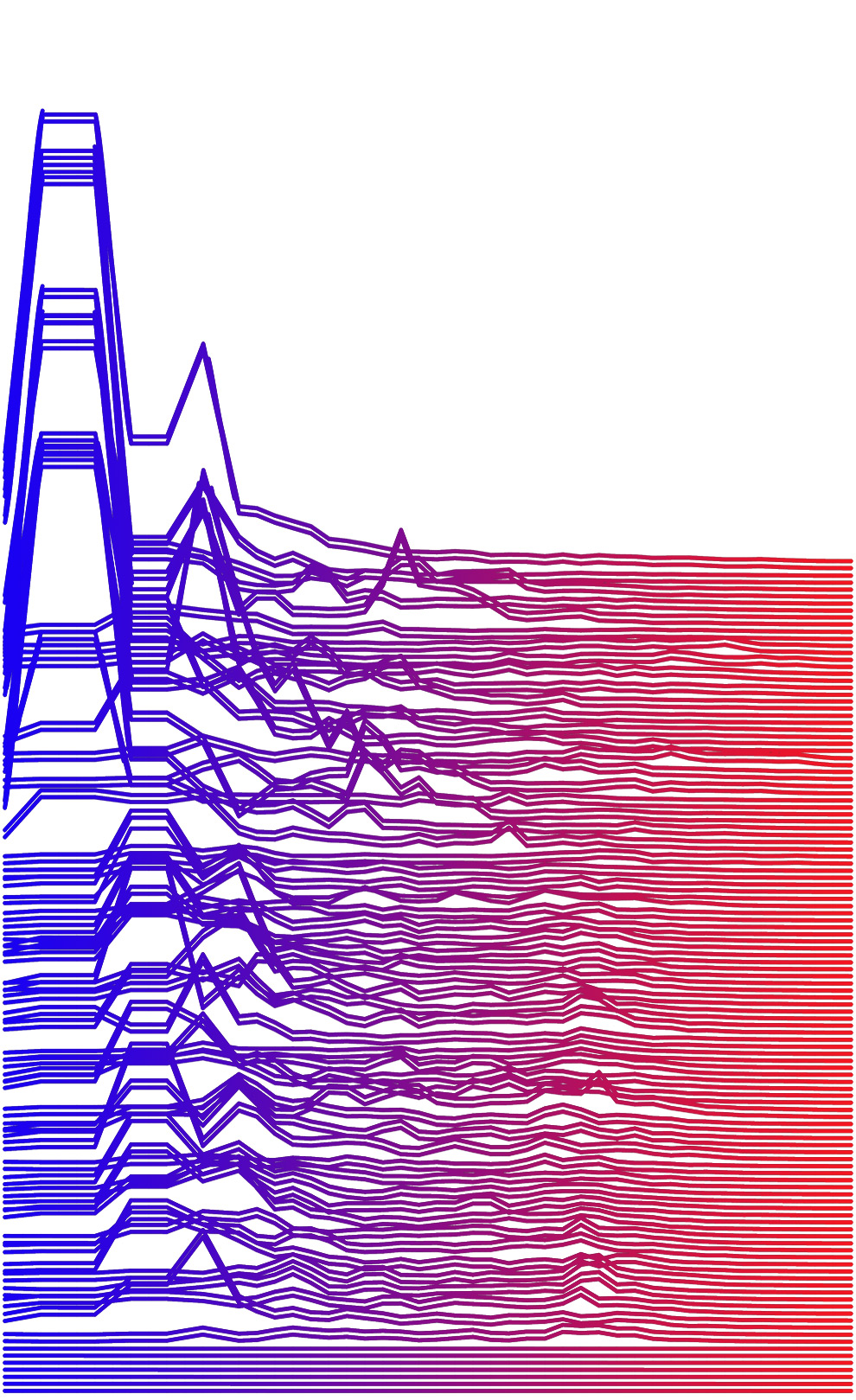

1) “Young Americans” – David Bowie. The brief pause at 4:19. (youtube link) Also, per Jennifer Egan in A Visit from the Goon Squad: “This is a lost opportunity. Hell, it would’ve been so easy to draw out the pause after ‘…break down and cry…’ to a full second, or 2, or 3, but Bowie must’ve chickened out for some reason.”

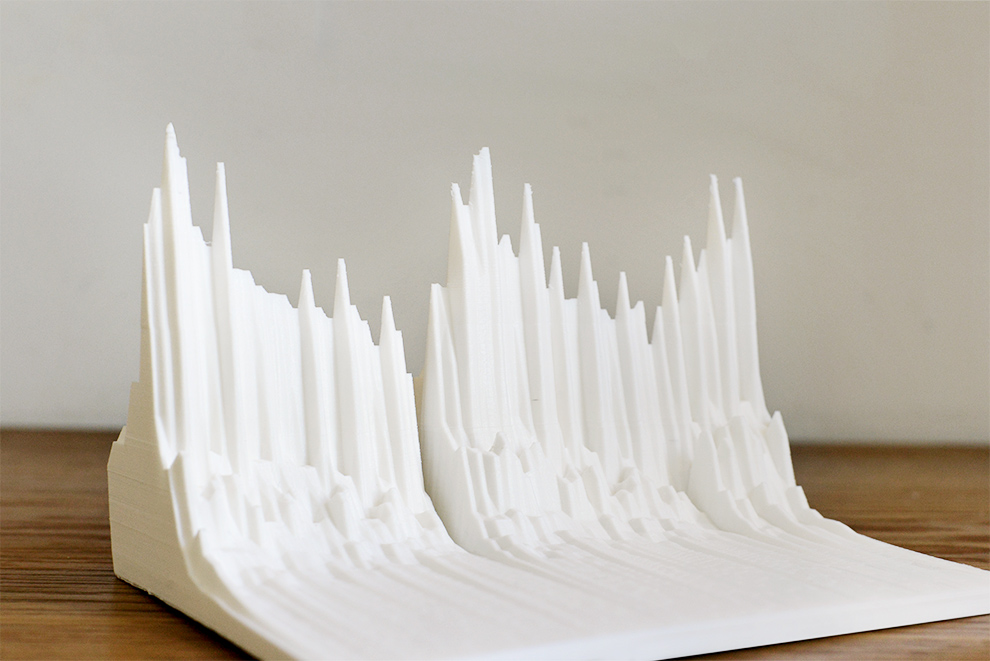

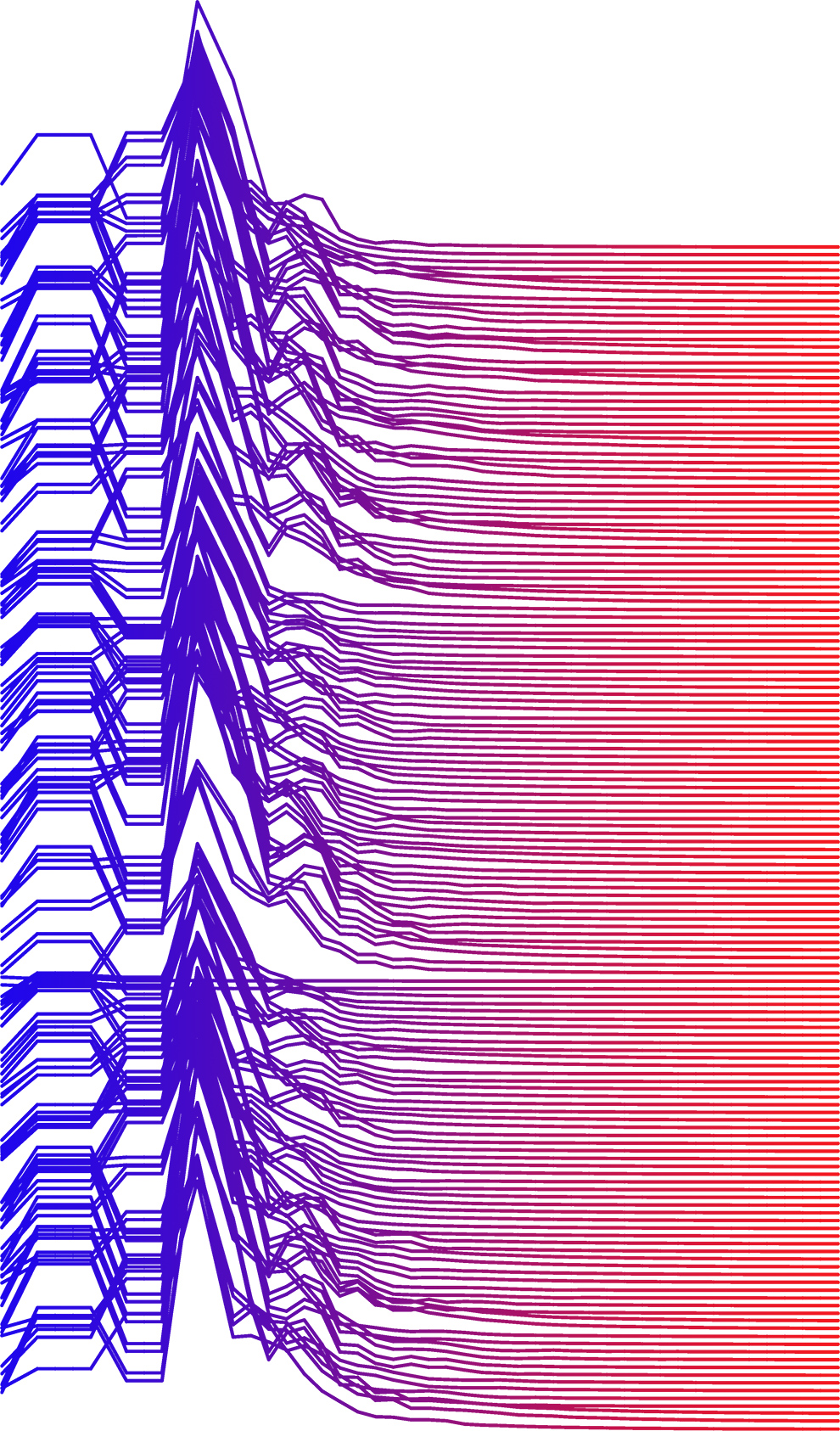

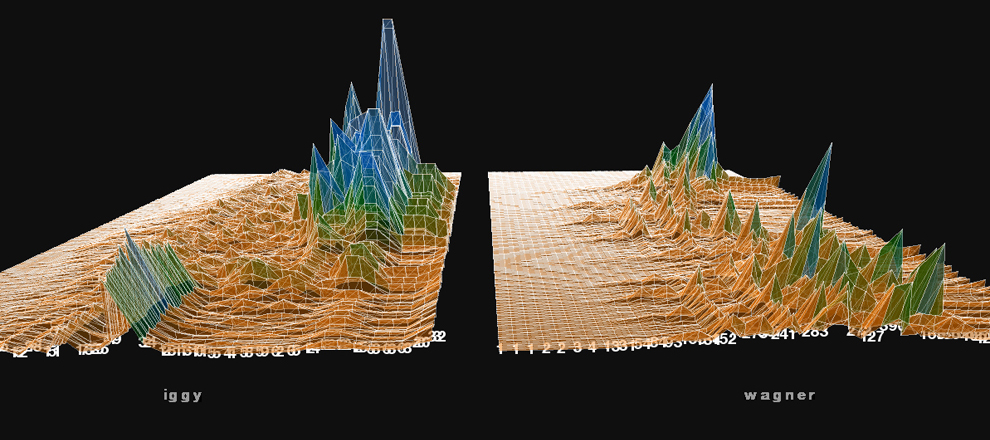

2) “Ride of the Valkyries” – Richard Wagner. The introduction of the main theme including the arrival of the brass instruments. (youtube link)

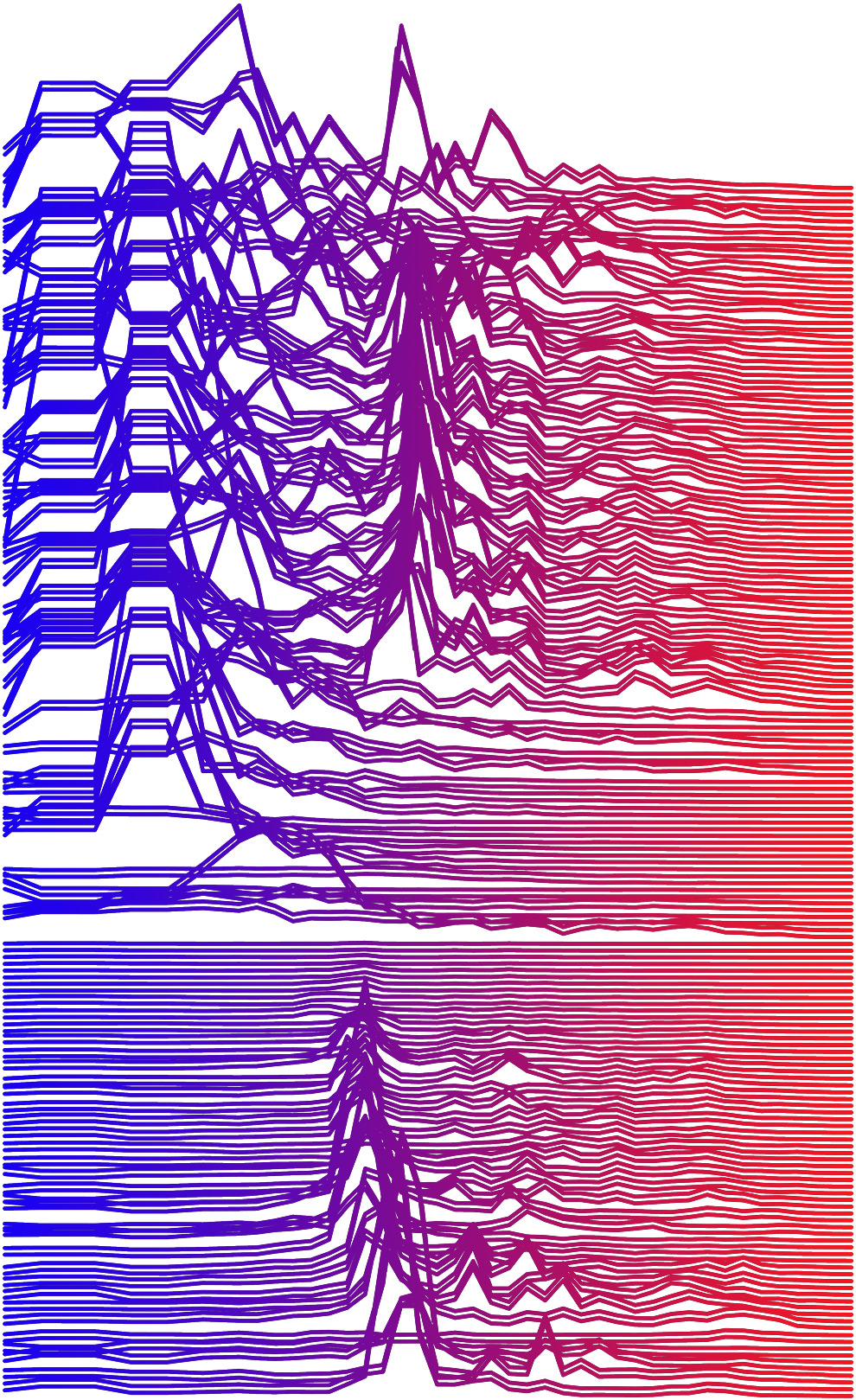

3) “Mood Indigo” – Duke Ellington. Jimmy Hamilton’s introduction on the clarinet. (youtube link)

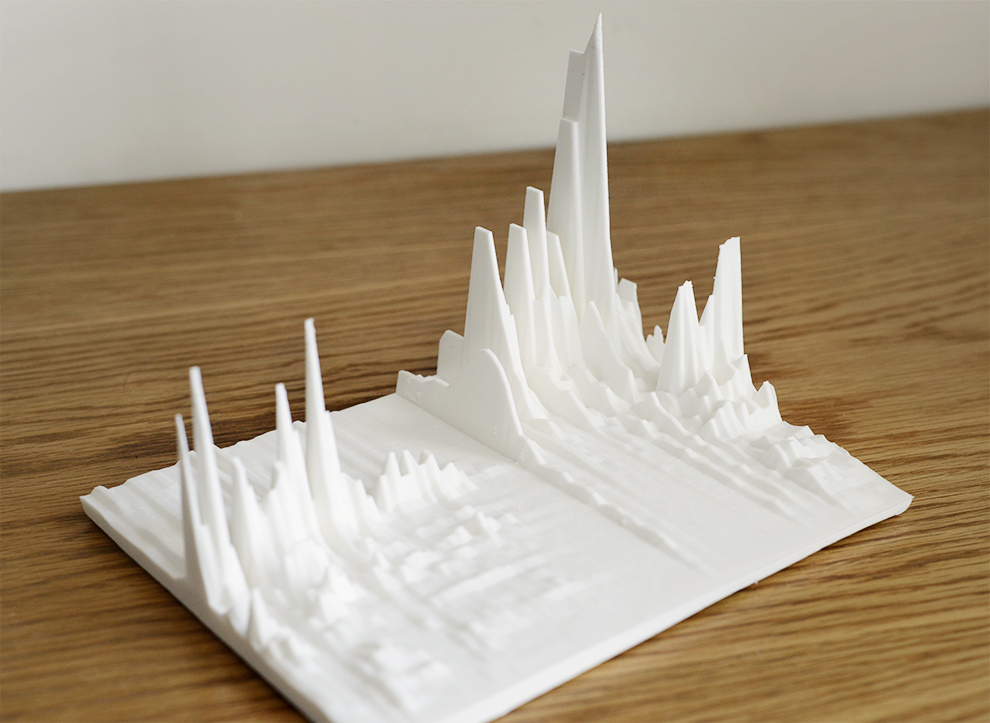

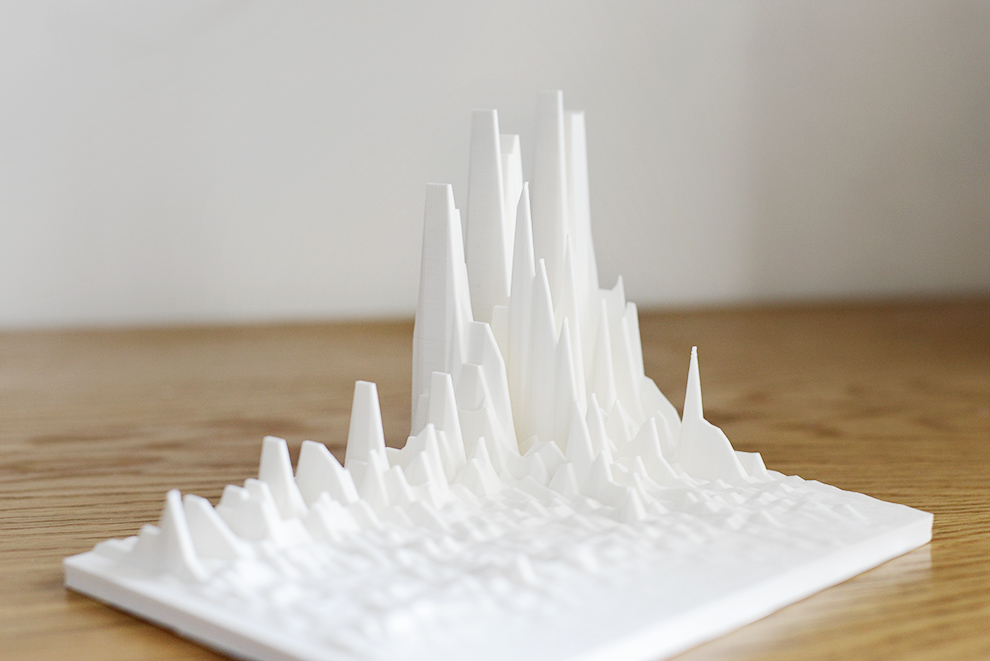

4) “Sonified Starlight” – NASA. Translation of light waves emanating from star KIC 7671081B into an audible pattern via NASA’s Kepler Input Catalog. (soundcloud link)

Lastly, drop me a line if you’d be interested in your own 3D printed soundwave.
